One morning you find out your favorite Airflow DAG did not run that night. Sad…

Six months later the task ran twice, and now you understand: you scheduled your DAG timezone-aware, and the clock jumps forward and back once a year each because of Daylight Saving Time.

For example, in Central European Time (CET) on Sunday 29 March 2020 at 02:00, the clocks were turned forward 1 hour from “local standard time” to 03:00 “local daylight time”. And recently, on Sunday 25 October 2020 at 03:00, the clocks were turned backward 1 hour from “local daylight time” to 02:00 “local standard time”. That means any local time between 02:00 and 03:00 either does not exist (in March) or occurs twice (in October).
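To make the gap and the repeated hour concrete, here is a minimal sketch that classifies a wall-clock time in Amsterdam. It uses the standard library's `zoneinfo` (Python 3.9+) as a stand-in for Pendulum, and the helper name `classify` is made up for this illustration; it is not part of Airflow.

```python
from datetime import datetime, timezone
from zoneinfo import ZoneInfo

AMS = ZoneInfo("Europe/Amsterdam")


def classify(naive):
    """Label a naive Amsterdam wall-clock time as normal, missing or ambiguous.

    Hypothetical helper for illustration only.
    """
    # fold=0 and fold=1 select the two candidate UTC offsets around a transition.
    first = naive.replace(tzinfo=AMS, fold=0)
    second = naive.replace(tzinfo=AMS, fold=1)
    if first.utcoffset() == second.utcoffset():
        return "normal"
    # A time inside the spring gap does not survive a round trip through UTC.
    round_trip = first.astimezone(timezone.utc).astimezone(AMS)
    if round_trip.replace(tzinfo=None) != naive:
        return "missing"
    return "ambiguous"


print(classify(datetime(2020, 3, 28, 2, 42)))   # normal
print(classify(datetime(2020, 3, 29, 2, 42)))   # missing
print(classify(datetime(2020, 10, 25, 2, 42)))  # ambiguous
```

A schedule that fires at 02:42 local time lands exactly in the problematic hour on both dates, which is why that time makes a good test case.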

Does Airflow REALLY behave like this?

Spoiler: luckily it does not :)

This is our simple timezone-aware DAG with a DummyOperator. It starts before and ends after March 29th 2020:

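```python
from datetime import datetime

import pendulum
from airflow import DAG
from airflow.operators.dummy_operator import DummyOperator

# Attach the local timezone so the cron schedule follows Amsterdam wall-clock time.
local_tz = pendulum.timezone("Europe/Amsterdam")

default_args = dict(
    # The window spans the switch to daylight time on 29 March 2020.
    start_date=datetime(2020, 3, 28, tzinfo=local_tz),
    end_date=datetime(2020, 4, 1, tzinfo=local_tz),
    owner='joost.dobken',
)

# Run daily at 02:42, inside the hour that is skipped on 29 March.
with DAG('check_daylight_savings', schedule_interval='42 2 * * *', default_args=default_args) as dag:
    DummyOperator(task_id='dummy', dag=dag)
```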

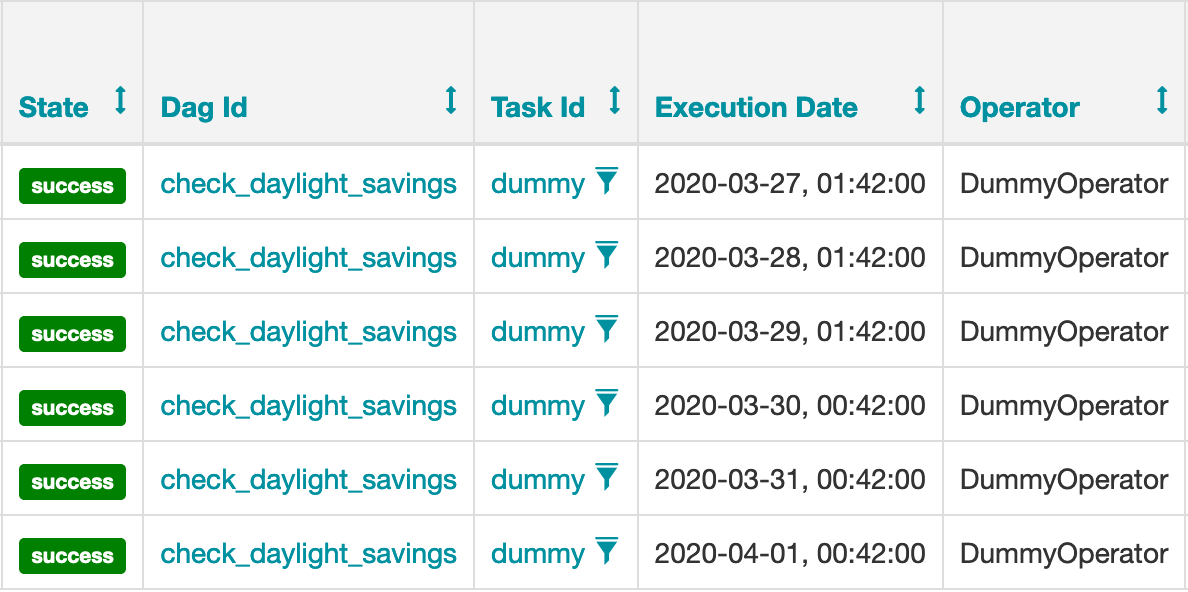

So if our hypothesis is true, we expect the task run for the 29th to be missing. After running the tasks, we query the task instances. You can see the shift of one hour in execution time, but the task is really there!

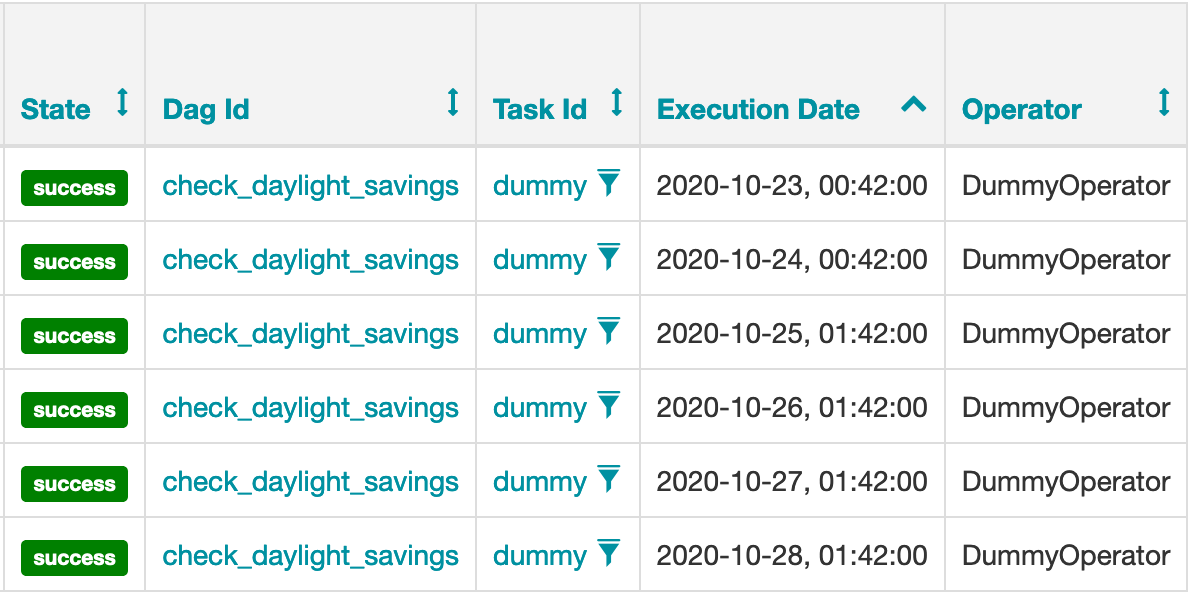
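The one-hour shift can be reproduced outside Airflow. The sketch below maps a daily 02:42 Amsterdam wall-clock time to UTC for a few consecutive days. It uses the standard library's `zoneinfo` rather than Pendulum, and `daily_runs_in_utc` is a hypothetical helper, not Airflow's scheduler logic, so treat it as an illustration of the offset change only.

```python
from datetime import date, datetime, time, timedelta, timezone
from zoneinfo import ZoneInfo

AMS = ZoneInfo("Europe/Amsterdam")


def daily_runs_in_utc(first_day, days, at=time(2, 42)):
    """Map a daily local wall-clock time to UTC for consecutive days.

    Hypothetical helper for illustration only.
    """
    runs = []
    for n in range(days):
        day = first_day + timedelta(days=n)
        # Interpret the wall-clock time in Amsterdam, then convert to UTC.
        local = datetime.combine(day, at, tzinfo=AMS)
        runs.append((day, local.astimezone(timezone.utc)))
    return runs


for day, utc in daily_runs_in_utc(date(2020, 3, 27), 5):
    print(day, "->", utc.strftime("%Y-%m-%d %H:%M UTC"))
```

Up to the 28th, 02:42 local time is 01:42 UTC; from the 30th onward it is 00:42 UTC. How the nonexistent 02:42 on the 29th is resolved depends on the library, so the value printed for that day need not match what Airflow records.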

Maybe the task will run twice when we go back to standard time. So we change the DAG to start before and end after October 25th 2020 and query the task instances again:

23, 24, 25, 26, 27 and 28… a clear sky

Fortunately, the task is there only once! Support for Pendulum (instead of the less stable pytz) is built into Airflow 1.10, and I checked with version 1.10.12. Working with different time zones can be really complicated; that's why we simply use the code, thank the people who dealt with this before us, and never look at it again!